Video to Text Description Using Deep Learning and Transformers | COOT

Last Updated on July 21, 2023 by Editorial Team

Author(s): Louis Bouchard

Originally published on Towards AI.

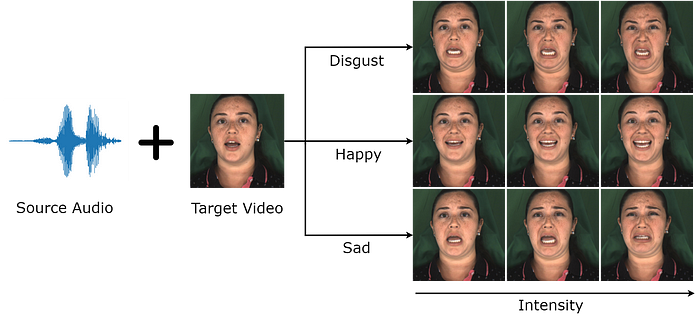

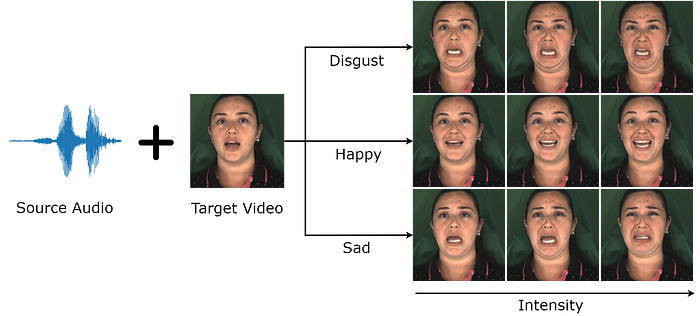

This new model published in the NeurIPS2020 conference uses transformers to generate accurate text descriptions for each sequence of a video, using both the video and a general description of it as inputs.

As many of you guys may already know, we are approaching the date of the Neural Information Processing Systems conference, also referred to as the NeurIPS conference. Where many awesome papers will become publicly available and shared with a wider audience. I will certainly be covering the most interesting ones, just like the one in this video.

Which is called COOT: Cooperative Hierarchical Transformer for Video-Text Representation Learning. As the name states, it uses transformers to generate accurate text descriptions for each sequence of a video, using both the video and a general description of it as inputs.

Before continuing, if you… Read the full blog for free on Medium.

Join thousands of data leaders on the AI newsletter. Join over 80,000 subscribers and keep up to date with the latest developments in AI. From research to projects and ideas. If you are building an AI startup, an AI-related product, or a service, we invite you to consider becoming a sponsor.

Published via Towards AI

Logo:

Logo:  Areas Served:

Areas Served: